With the help of you all and some outside sources, I'm finally starting to make sense of the datalogs from my first drag test, and it isn't working the way you'd logically expect. Typically, you'd think that more voltage would also require more current and more power to get more rpm (and therefore more boost). But it seems that less voltage is requiring more current and wattage to produce LESS rpm and boost.
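The expectation here rests on electrical power being voltage times current, P = V × I. Rearranged, I = P / V: delivering the same power at a lower pack voltage takes proportionally more current. A minimal Python sketch of that relationship; the 10 kW demand and both pack voltages are hypothetical illustration values, not figures from these datalogs:

```python
# Illustration of P = V * I rearranged to I = P / V.
# The power and voltage values below are HYPOTHETICAL, not from the datalogs.

def current_for_power(power_w: float, volts: float) -> float:
    """Current (A) needed to deliver power_w watts at a pack voltage of volts."""
    return power_w / volts

DEMAND_W = 10_000.0  # hypothetical supercharger motor power demand

for volts in (60.0, 50.0):  # hypothetical pack voltages
    amps = current_for_power(DEMAND_W, volts)
    print(f"{volts:.0f} V -> {amps:.0f} A for {DEMAND_W / 1000:.0f} kW")
# prints "60 V -> 167 A for 10 kW" then "50 V -> 200 A for 10 kW"
```

Dropping from 60 V to 50 V raises the current needed for the same 10 kW by 20%, since current scales with 1/V.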

Let me explain. On our second pass we had a "happy accident": we left hard enough off the transbrake that one of my packs disconnected itself, basically leaving us with one 16s pack instead of two 16s packs in parallel. That gave us two sets of data points to work from. Now, I realize the second pass was much quicker (ET-wise) but not much different speed-wise (MPH); that's mostly because on the first pass we left on less boost and had more wheelspin.
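With two identical packs wired in parallel, the voltage stays the same as a single pack while the load current is shared between them, so when one pack drops out, the remaining pack has to carry the whole load. A minimal, idealized Python sketch, assuming LiPo cells at 3.7 V nominal (the post doesn't state the chemistry), perfectly matched packs, and a hypothetical 170 A total draw:

```python
# Idealized model of 16s packs in series/parallel. Assumptions (not from the post):
# LiPo cells at 3.7 V nominal, identical packs, even current sharing,
# and a hypothetical 170 A total draw.

CELLS_IN_SERIES = 16
NOMINAL_CELL_V = 3.7  # assumption: LiPo chemistry

def pack_voltage(cells: int, cell_v: float) -> float:
    """Cells in series add their voltages."""
    return cells * cell_v

def per_pack_current(total_a: float, packs_in_parallel: int) -> float:
    """Identical packs in parallel split the load current evenly."""
    return total_a / packs_in_parallel

TOTAL_A = 170.0  # hypothetical total current draw

print(f"16s nominal: {pack_voltage(CELLS_IN_SERIES, NOMINAL_CELL_V):.1f} V")
for packs in (2, 1):
    print(f"{packs} pack(s) in parallel: {per_pack_current(TOTAL_A, packs):.0f} A per pack")
```

Real packs don't share current perfectly, and a single pack carrying double the current will generally sag further under load because of its internal resistance.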

First, let's look at the EFI datalogs so you can see what I mean.

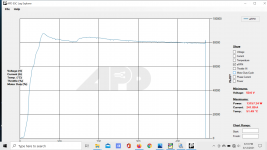

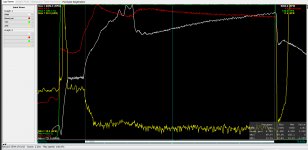

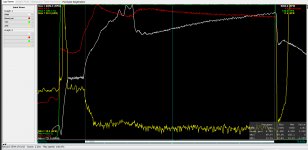

First pass (2x 16s packs in parallel):

The white trace is engine rpm, the red trace is boost and the green trace is throttle position. You can see the rather large amount of wheelspin at the top of first gear and at the bottom of second (which, with a Powerglide, is more like 2nd or 3rd gear in a "normal" car, and on slicks no less); if there were no wheelspin, the rpm trace would be smooth up to the shift. That accounts for the loss in ET, but it also shows we're making a good bit of power. You can also see that peak boost is 6 psi and average boost is 4.765 psi, even though we left the line at only 2.4 psi.

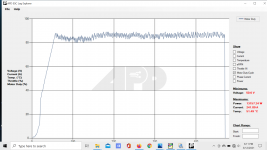

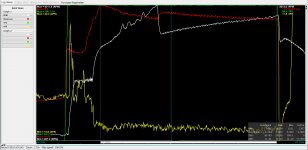

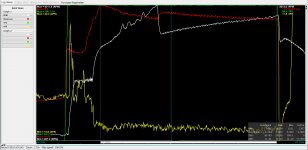

Now let's look at the second datalog:

Here you see much less wheelspin in 1st gear, and less boost: a peak of 5.9 psi and an average of 4.514 psi, even though we left the line at almost peak boost (it was probably closer to 4 psi, but still much more than on the 1st pass). Again, that gave us almost a HALF SECOND improvement in ET, even though we were actually making less power. This is the pass where a battery pack became disconnected on the launch.
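The boost figures from the two passes can be compared side by side. A small Python sketch using only the numbers quoted in this post; note that the 4.0 psi launch value for the second pass is the rough "probably closer to 4 psi" estimate, not a logged value:

```python
# Boost figures (psi) quoted in this post. The pass-2 launch value is an estimate.
passes = {
    "pass 1 (2x 16s in parallel)": {"launch": 2.4, "peak": 6.0, "avg": 4.765},
    "pass 2 (1x 16s after disconnect)": {"launch": 4.0, "peak": 5.9, "avg": 4.514},
}

def boost_deltas(first: dict, second: dict) -> dict:
    """Absolute (psi) and relative (%) change from the first pass to the second."""
    return {
        key: (second[key] - first[key], 100.0 * (second[key] - first[key]) / first[key])
        for key in first
    }

p1, p2 = passes.values()
for key, (delta_psi, delta_pct) in boost_deltas(p1, p2).items():
    print(f"{key:>6}: {delta_psi:+.3f} psi ({delta_pct:+.1f}%)")
```

By these numbers, the second pass averaged about a quarter psi (roughly 5%) less boost despite launching with more.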

Now on to the interesting bit... I'll put it in the following post just to keep things manageable.